Schools regularly point to learning theory as justification for instructional practices, but the way those theories are used in classrooms rarely reflects how learning actually occurs for students. The gap comes not from teachers misunderstanding theory but from schools layering multiple theories at once without creating the conditions that make any of them effective. The result is a system that looks theoretical on paper but functions as compliance in practice.

What Schools Think They’re Doing

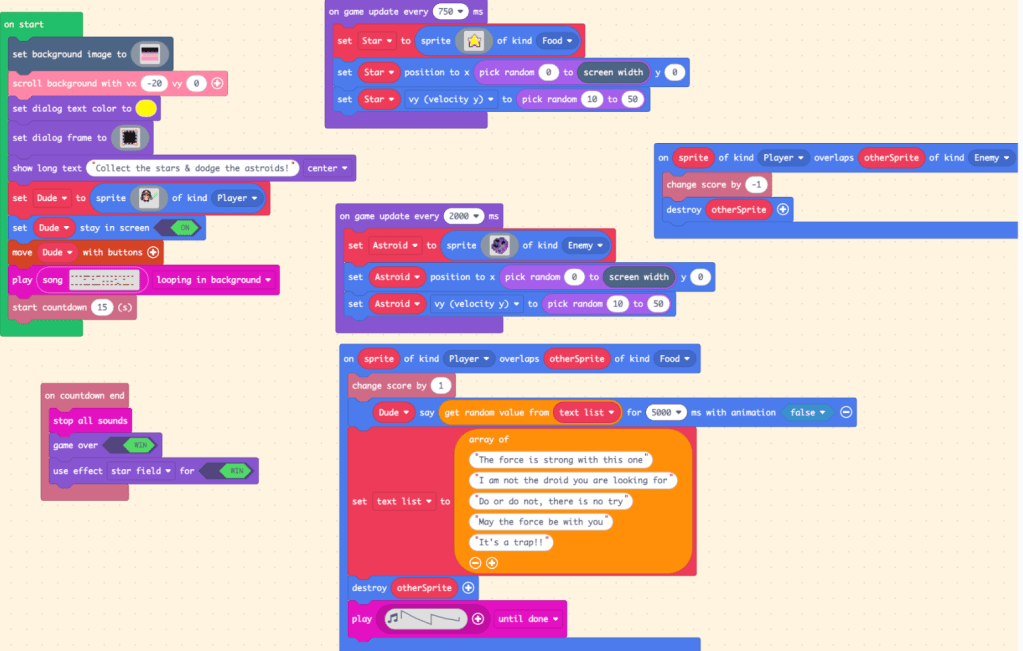

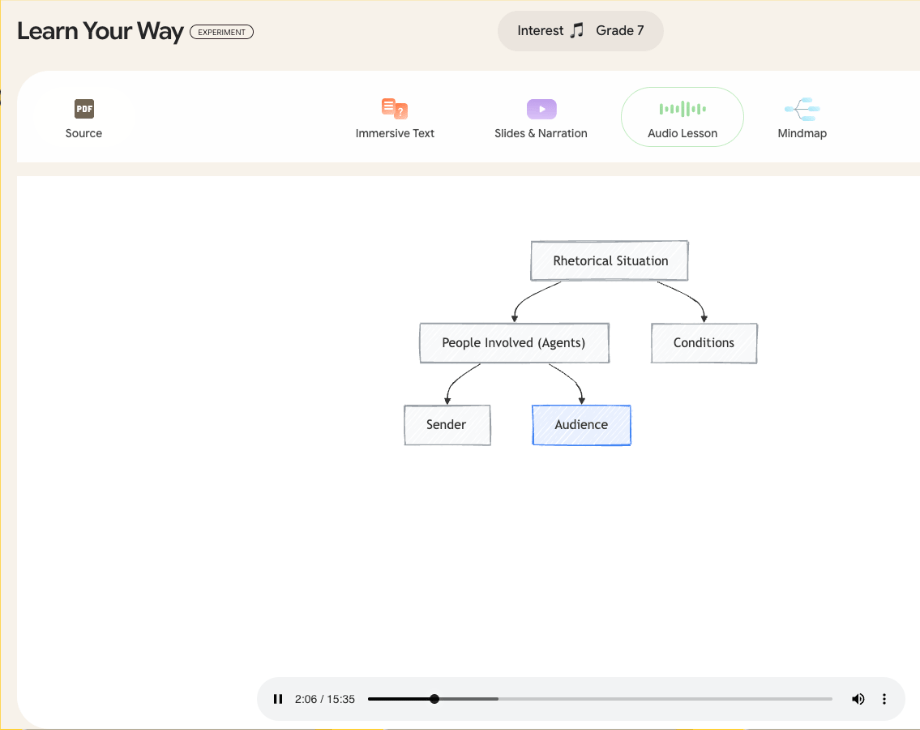

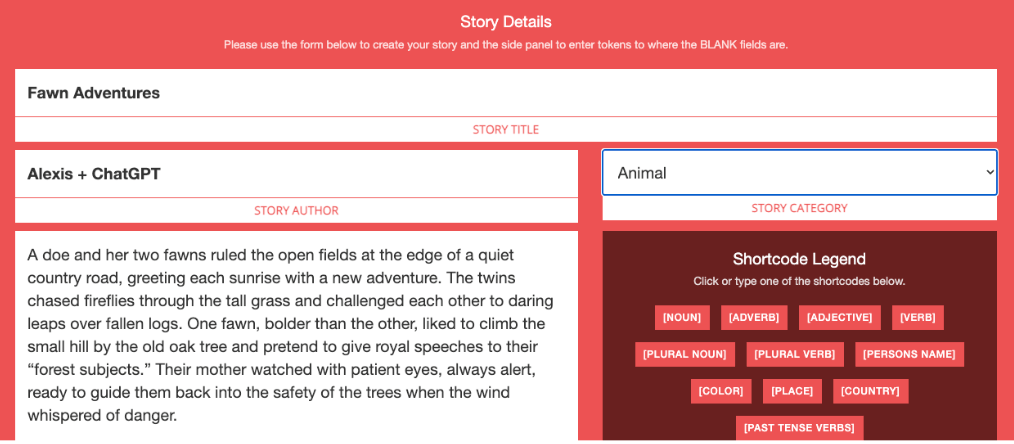

Schools believe they are implementing behaviorism, cognitivism, and social learning to support growth. Attention getters are treated as classical conditioning, assumed to cue instant silence (McLeod, 2024). Write-ups are framed as operant conditioning, intended to change behavior through consequence (Cherry, 2024). Scripted curriculum is marketed as schema-building, connected to cognitivist ideas about sequencing knowledge so it encodes into memory (Putnam and Borko, 2000). Whole-group lessons are described as constructivist because students are “given” knowledge before applying it. Manipulatives are displayed as constructionism, even though most activities are teacher-directed reproductions rather than student-created models. Schools also reference Vygotsky’s Zone of Proximal Development (ZPD) to justify grouping and proximity support (Vygotsky, 1979), and they call group work collaboration, assuming it reflects social learning. The language is correct, but the implementation is shallow. These strategies gesture toward theory instead of embodying it.

What Actually Happens in Classrooms

In practice, these strategies break down quickly. Many students repeat the attention getter but keep talking, showing there is no conditioned behavioral shift. Write-ups become documentation rather than reinforcement because there is rarely a meaningful consequence attached. Scripted curriculum forces teachers to cover content rather than connect it, and they are blamed when students fail to meet benchmarks despite “following the program.” Whole-group instruction widens learning gaps in classrooms where readiness levels stretch across several grade levels. Manipulatives become compliance tools instead of thinking tools, used to produce the teacher’s predetermined answer. Group work is often one student doing the writing while the others stay passive. Real learning happens, but it happens around the system, not because of it.

The Assessment Mismatch

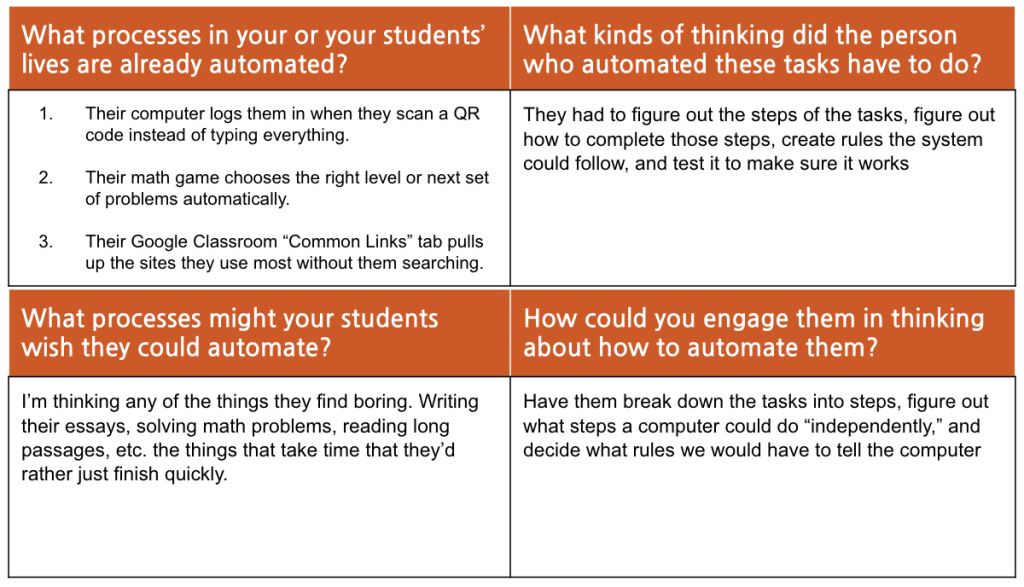

The biggest problem with assessment in school is that scores end up mattering more than the actual learning behind them. Students memorize information just long enough to retrieve it, but because it is held only in short-term memory and never linked to prior knowledge, it never becomes part of a schema. They are not building understanding but rehearsing. Cognitivism shows that learning sticks when encoding leads to meaningful retrieval (Putnam and Borko, 2000), but the pace of curriculum prevents students from ever getting there. In data meetings, the conversation is about whether numbers moved, not whether thinking deepened. Students may never hear the meeting, but they feel its impact when instruction is rushed and curiosity is treated as a distraction. High-performing students learn their value lies in staying ahead, while struggling students learn they are permanently behind. Instruction becomes about surviving the pacing map instead of working within a child’s actual ZPD. The test becomes the finish line and the number becomes the identity, which is the opposite of what learning is supposed to be.

Where Real Learning Actually Happens

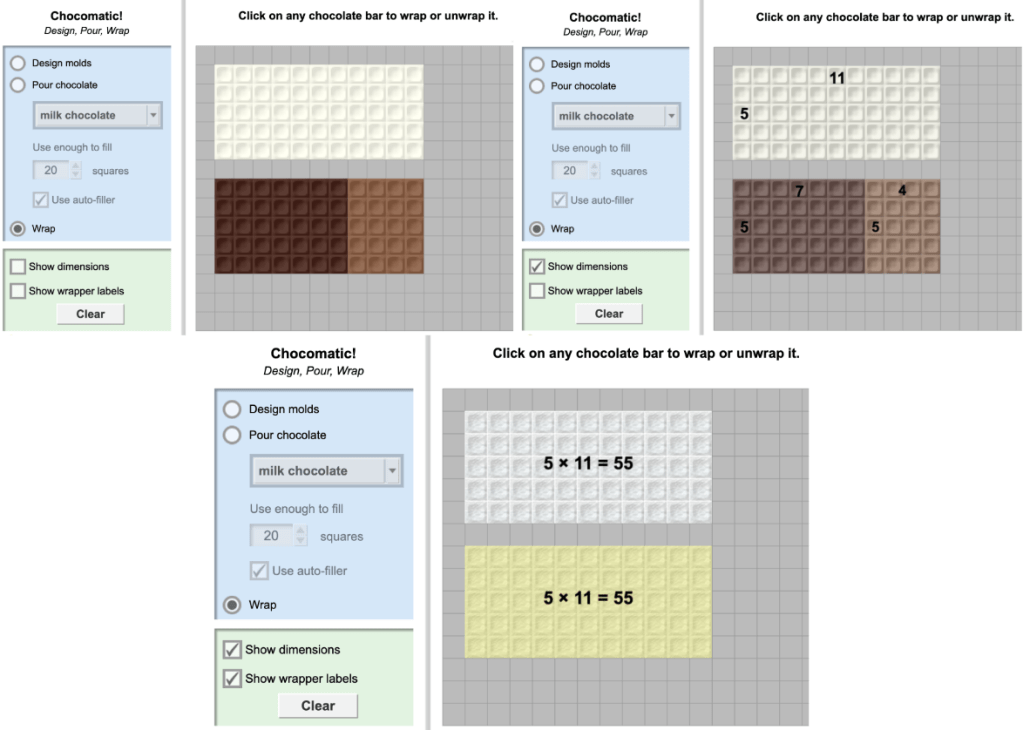

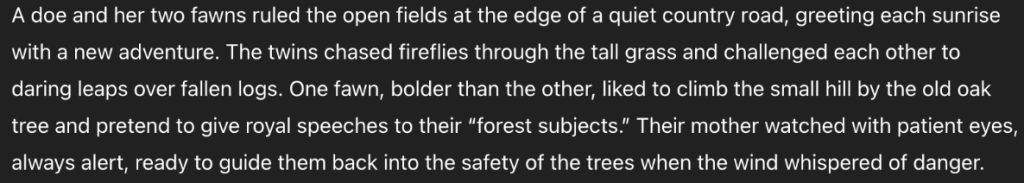

Real learning shows up when students can participate, observe, and make meaning in context. It appears when students learn from peers through modeling, which reflects observational learning (Bandura, 1971). It happens when one student becomes a more knowledgeable other for a classmate through natural apprenticeship instead of teacher assignment. It surfaces when students engage with their environment and the learning is situative rather than scripted. One example is when my class went outside and built arrays using wood chips, sticks, and leaves. The content was identical, but the change in environment transformed their thinking. Students were testing, revising, troubleshooting, and explaining. They were immersed in a community of practice rather than performing understanding for a worksheet. The motivation came from relevance and participation, not reinforcement charts.

The Big Claim

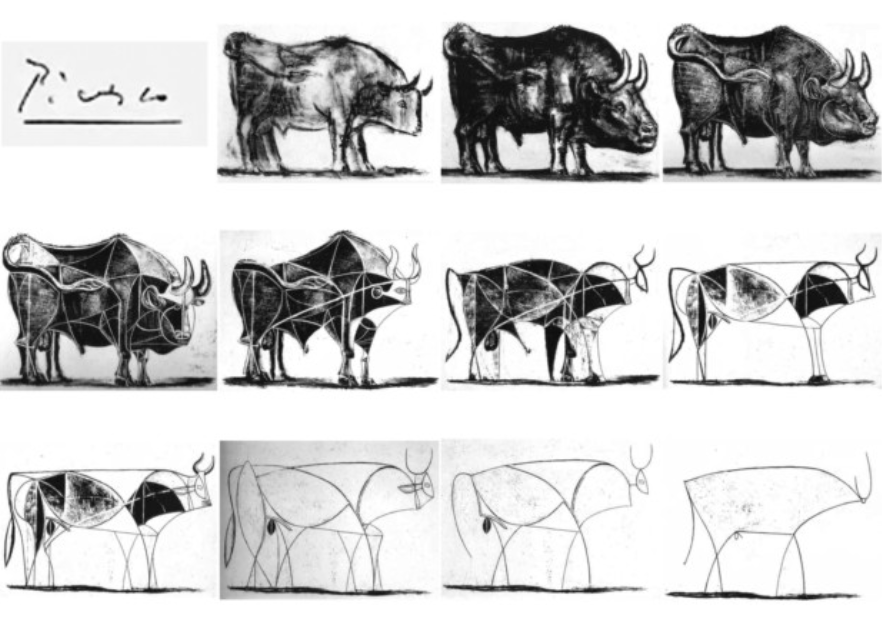

What school delivers most of the time is education, not learning. Education is passive, curriculum-centered, and driven by extrinsic performance goals. Learning is active, curiosity-driven, and rooted in intrinsic motivation. When instruction serves pacing rather than understanding, students perform knowledge instead of developing it. Curiosity becomes something to “fit in later” instead of something to build from. The most meaningful academic moments are often the ones that stray from the script, when student questions lead to investigation, connection-making, and discovery. Those are the moments where students are not being educated but are becoming thinkers.

Reframing What School Could Be

If the real focus of school were learning instead of assessment, classrooms would function differently. Students would have agency in the questions being explored. Apprenticeship and peer modeling would be normalized rather than incidental. Scaffolding would be responsive instead of standardized. Classrooms would operate as communities of practice, where ideas are built, tested, and revised, not rehearsed for a score. Assessment could support learning if it measured participation, transfer, and growth within authentic activity, aligned with situated learning principles (Lave and Wenger, 1991). In a school built around learning, students would not perform understanding to prove mastery. They would interact with ideas until mastery becomes visible on its own.

References

Bandura, A. (1971). Social learning theory (Vol. 1). General Learning Press.

Cherry, K. (2024, July 10). Operant conditioning in psychology: Why being rewarded or punished affects how you behave. Verywell Mind.

McLeod, S. (2024, February 1). Classical conditioning: How it works with examples. Simply Psychology.

Putnam, R. T., and Borko, H. (2000). What do new views of knowledge and thinking have to say about research on teacher learning? Educational Researcher, 29(1), 4–15.

Vygotsky, L. S. (1979). Consciousness as a problem in the psychology of behavior. Russian Social Science Review, 20(4), 47–79.

Lave, J., and Wenger, E. (1991). Situated learning: Legitimate peripheral participation. Cambridge University Press.