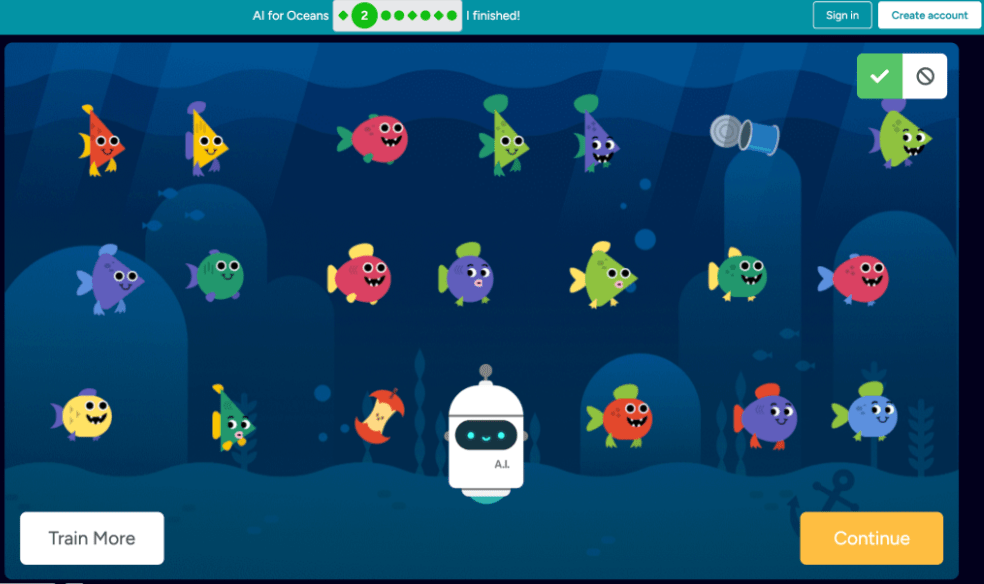

This week, I experimented with training a simple AI model using Code.org’s AI for Oceans activity.

The task seemed straightforward: train the AI to recognize fish based on the examples I provided. I felt confident I had trained it well, and yet it still let an apple into the fish category.

That moment was eye-opening.

It forced me to reconsider what I thought I had clearly defined. If the AI misclassified an apple, was the problem with the algorithm or with my training data? I began to see how critical it is to provide diverse, precisely labeled examples when training a model.

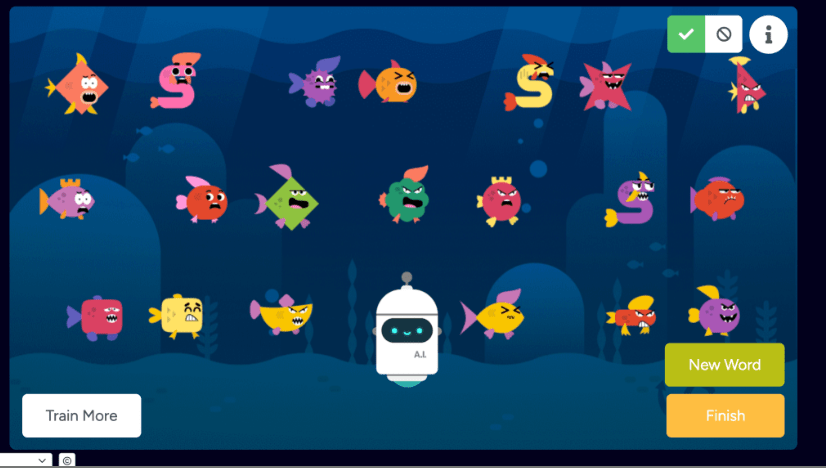

Another challenge was defining what counts as a “glitchy fish.” That label was subjective: what I considered glitchy might not match the patterns the AI was picking up on. I eventually switched to labeling fish as “angry,” but even that felt like a matter of opinion.

This activity highlighted an important point: AI does not understand context the way humans do. It detects patterns based on the data it is given. If the training data is incomplete or biased, the results will reflect that.

Training AI requires more than technical steps. It requires intentional decision-making and awareness of bias.

And sometimes, even when you think you trained it well, an apple still sneaks through.
